Ah, OK, this is an optional step, as the first CPU is usually the one with the most load. But it looks like you set it to CPU2 and later to CPU3 (or vice versa, as I see TX interrupts on CPU0, 2 and 3).

I have not attached statistics for the tests on the 6.16 kernel yet. I first wanted to figure out what to do about smp_affinity for the Ethernet TX IRQ, which is not working as expected for me at the moment. I do not understand why TX can use only one CPU core, as this limits TX to 8 Gbps.
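For readers following along, here is a minimal sketch of how an IRQ is pinned to one CPU through /proc/irq/<n>/smp_affinity, which takes a hexadecimal CPU bitmask. The function names and the IRQ number in the example are made up for illustration; the real TX IRQ number has to be looked up in /proc/interrupts, and whether a multi-CPU mask actually spreads the load depends on the interrupt controller.

```shell
#!/bin/sh
# cpu_mask N: print the hexadecimal affinity bitmask that selects only CPU N.
cpu_mask() {
    printf '%x\n' "$((1 << $1))"
}

# set_irq_affinity IRQ CPU: pin the given IRQ to a single CPU (needs root).
# Hypothetical helper; it only writes the mask to the standard procfs file.
set_irq_affinity() {
    cpu_mask "$2" > "/proc/irq/$1/smp_affinity"
}

# Example with a hypothetical IRQ number (check /proc/interrupts first):
# set_irq_affinity 105 2
```

With this, `cpu_mask 2` prints `4` and `cpu_mask 3` prints `8`, which are the values one would echo by hand for CPU2 and CPU3.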

Here are the test results and the stats without setting smp_affinity for the Ethernet TX IRQ:

- iperf -P 4 -t 30 --full-duplex --client

[ ID] Interval Transfer Bandwidth

[ 1] 0.0000-30.0003 sec 22.5 GBytes 6.45 Gbits/sec

[ *1] 0.0000-30.0082 sec 20.4 GBytes 5.83 Gbits/sec

[SUM] 0.0000-30.0082 sec 42.9 GBytes 12.3 Gbits/sec

And here are the stats:

cat /proc/mtketh/hw_lro_stats

HW LRO statistic dump:

Cnt: RING4 | RING5 | RING6 | RING7 Total

0 : 0 0 0 0 0

1 : 9321 0 0 0 9321

2 : 22946 0 0 0 22946

3 : 7955 0 0 0 7955

4 : 15414 0 0 0 15414

5 : 35881 0 0 0 35881

6 : 6330 0 0 0 6330

7 : 14123 0 0 0 14123

8 : 1818869 0 0 0 1818869

9 : 283 0 0 0 283

10 : 0 0 0 0 0

11 : 0 0 0 0 0

12 : 0 0 0 0 0

13 : 0 0 0 0 0

14 : 0 0 0 0 0

15 : 0 0 0 0 0

16 : 0 0 0 0 0

17 : 0 0 0 0 0

18 : 0 0 0 0 0

19 : 0 0 0 0 0

20 : 0 0 0 0 0

21 : 0 0 0 0 0

22 : 0 0 0 0 0

23 : 0 0 0 0 0

24 : 0 0 0 0 0

25 : 0 0 0 0 0

26 : 0 0 0 0 0

27 : 0 0 0 0 0

28 : 0 0 0 0 0

29 : 0 0 0 0 0

30 : 0 0 0 0 0

31 : 0 0 0 0 0

32 : 0 0 0 0 0

33 : 0 0 0 0 0

34 : 0 0 0 0 0

35 : 0 0 0 0 0

36 : 0 0 0 0 0

37 : 0 0 0 0 0

38 : 0 0 0 0 0

39 : 0 0 0 0 0

40 : 0 0 0 0 0

41 : 0 0 0 0 0

42 : 0 0 0 0 0

43 : 0 0 0 0 0

44 : 0 0 0 0 0

45 : 0 0 0 0 0

46 : 0 0 0 0 0

47 : 0 0 0 0 0

48 : 0 0 0 0 0

49 : 0 0 0 0 0

50 : 0 0 0 0 0

51 : 0 0 0 0 0

52 : 0 0 0 0 0

53 : 0 0 0 0 0

54 : 0 0 0 0 0

55 : 0 0 0 0 0

56 : 0 0 0 0 0

57 : 0 0 0 0 0

58 : 0 0 0 0 0

59 : 0 0 0 0 0

60 : 0 0 0 0 0

61 : 0 0 0 0 0

62 : 0 0 0 0 0

63 : 0 0 0 0 0

64 : 0 0 0 0 0

Total agg: RING4 | RING5 | RING6 | RING7 Total

15010479 0 0 0 15010479

Total flush: RING4 | RING5 | RING6 | RING7 Total

1931122 0 0 0 1931122

Avg agg: RING4 | RING5 | RING6 | RING7 Total

7 0 0 0 7

HW LRO flush pkt len:

Length | RING4 | RING5 | RING6 | RING7 Total

0~5000: 40665 0 0 0 40665

5000~10000: 58007 0 0 0 58007

10000~15000: 1832450 0 0 0 1832450

15000~20000: 0 0 0 0 0

20000~25000: 0 0 0 0 0

25000~30000: 0 0 0 0 0

30000~35000: 0 0 0 0 0

35000~40000: 0 0 0 0 0

40000~45000: 0 0 0 0 0

45000~50000: 0 0 0 0 0

50000~55000: 0 0 0 0 0

55000~60000: 0 0 0 0 0

60000~65000: 0 0 0 0 0

65000~70000: 0 0 0 0 0

70000~75000: 0 0 0 0 0

Flush reason: RING4 | RING5 | RING6 | RING7 Total

AGG timeout: 1252 0 0 0 1252

AGE timeout: 0 0 0 0 0

Not in-sequence: 2638 0 0 0 2638

Timestamp: 0 0 0 0 0

No LRO rule: 108465 0 0 0 108465
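As a cross-check of the dump above, the summary rows can be recomputed from the non-zero "Cnt" rows of RING4. This is only a sketch: the function name is made up, and the assumption that "Avg agg" is the integer part of total aggregated packets divided by total flushes is inferred from the numbers, not taken from the driver source.

```shell
#!/bin/sh
# lro_summary: read "<aggregation count> <number of flushes>" pairs on stdin
# and print total aggregated packets, total flushes and their ratio.
lro_summary() {
    awk '{ agg += $1 * $2; flush += $2 }
         END { printf "agg=%d flush=%d avg=%.2f\n", agg, flush, agg / flush }'
}

# Non-zero Cnt rows for RING4, copied from the dump above.
lro_rows='1 9321
2 22946
3 7955
4 15414
5 35881
6 6330
7 14123
8 1818869
9 283'

printf '%s\n' "$lro_rows" | lro_summary
```

This prints `agg=15010479 flush=1931122 avg=7.77`, which matches the "Total agg" and "Total flush" rows; the reported "Avg agg" of 7 looks like the same ratio truncated to an integer.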

For me, this configuration is the best one. Optimising TX would be nice: about 1.4 Gbps is left unused, which is about 14%.

Which iperf version do you have? I had limited throughput with the default one from Debian. In my tests, LRO needed iperf2 to reach 9.4 Gbit/s (the maximum).

$ iperf --version

iperf version 2.1.8 (12 August 2022) pthreads

After dealing with the nfs error:

$ journalctl -b -u nfs-server

Jul 22 06:14:45 nas systemd[1]: Dependency failed for nfs-server.service - NFS server and services.

Jul 22 06:14:45 nas systemd[1]: nfs-server.service: Job nfs-server.service/start failed with result 'dependency'.

I recompiled the kernel with NFS built in instead of as a module, and nfs-server started working.

- dd if=/dev/zero of=/mnt/nvme/test.img bs=10M count=1000 oflag=dsync

10370416640 bytes (10 GB, 9.7 GiB) copied, 39 s, 266 MB/s

- dd if=/dev/zero of=/mnt/raid0/test.img bs=10M count=1000 oflag=dsync

10349445120 bytes (10 GB, 9.6 GiB) copied, 61 s, 169 MB/s

- dd if=/mnt/nvme/test.img of=/dev/null bs=10M iflag=direct

9961472000 bytes (10 GB, 9.3 GiB) copied, 14 s, 711 MB/s

- dd if=/mnt/raid0/test.img of=/dev/null bs=10M iflag=direct

9856614400 bytes (9.9 GB, 9.2 GiB) copied, 13 s, 757 MB/s

The difference between 6.12.32 without RSS/LRO and 6.16.0 with RSS/LRO does not look as big as expected, but I think that, when using the BPI-R4 as a NAS server:

- writing to the NAS means receiving data over Ethernet/SFP, which uses the RSS/LRO functionality, but is probably limited by:

- writes to the NVMe and SATA drives are limited by the technology: these drives have to clear the space before writing new data, which makes writing slower, and, if I remember correctly, they read the data back to verify a successful write.

- reading from the NAS drives is much faster, as expected, and I am a little confused here, because I did not set up smp_affinity for TX frames.

It would make sense, but I have to ask: does this mean that the Linux kernel uses all cores for TX frames by default?

edit: nfs-server error added

I ran a test with a 3 GB RAM drive:

- dd if=/dev/zero of=/mnt/ramdrive/test.img bs=10M count=300 oflag=dsync

2883584000 bytes (2.9 GB, 2.7 GiB) copied, 5 s, 575 MB/s

- dd if=/mnt/ramdrive/test.img of=/dev/null bs=10M iflag=direct

2170552320 bytes (2.2 GB, 2.0 GiB) copied, 2 s, 1.1 GB/s

Why is writing “only” 50% of the maximum speed?

I assume nobody has an idea. I will run similar tests on newly compiled kernels in the future.

Hello again @frank-w,

I am wondering how to persist the RSS and LRO setup. I created a script to automate it, and I can create a “one-shot” service to execute it, but are there any better options?

While playing with it, I realised that in my performance tests dd with the oflag=dsync parameter lowers the performance a bit, so I will update these tests soon.

I don’t know of any specific setting via systemd or any other init system. So I guess creating a script and adding it as postup/predown into the networkd units is the best way.
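A minimal sketch of what such a script could look like, under stated assumptions: it only re-adds the tcp4 destination-IP rules with the `ethtool -N` syntax used elsewhere in this thread, the function name `apply_rss_rules` and the `DRY_RUN` variable are made up for illustration, and the interface name and addresses are placeholders. How it gets called (one-shot service, interface hook, and so on) is left open.

```shell
#!/bin/sh
# apply_rss_rules IFACE IP...: add one tcp4 rule per destination IP,
# using consecutive rule locations starting at 0.
# With DRY_RUN set to a non-empty value, the commands are printed instead of run.
apply_rss_rules() {
    iface=$1
    shift
    loc=0
    for ip in "$@"; do
        # ${DRY_RUN:+echo} expands to "echo" only when DRY_RUN is non-empty.
        ${DRY_RUN:+echo} ethtool -N "$iface" flow-type tcp4 dst-ip "$ip" action 0 loc "$loc" || return 1
        loc=$((loc + 1))
    done
}

# Example (placeholder interface and addresses), printing what would be run:
# DRY_RUN=1 apply_rss_rules eth0 192.168.89.92 192.168.89.91
```

The function stops at the first rule the driver rejects, so a failure shows up as a non-zero exit status in whatever unit or hook runs it.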

ls /sys/class/net/sfp-lan/queues/

rx-0 tx-0 tx-1 tx-10 tx-11 tx-12 tx-13 tx-14 tx-15 tx-2 tx-3 tx-4 tx-5 tx-6 tx-7 tx-8 tx-9

One rx vs 16 tx.
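Because ls sorts the entries as text (tx-10 comes before tx-2), the queues are easy to miscount by eye. A small sketch that counts them instead; the function name is made up, and the path in the example is the one from the listing above.

```shell
#!/bin/sh
# count_queues DIR: count the rx-* and tx-* entries in a queues directory.
count_queues() {
    dir=$1
    rx=$(ls -d "$dir"/rx-* 2>/dev/null | wc -l)
    tx=$(ls -d "$dir"/tx-* 2>/dev/null | wc -l)
    # The arithmetic expansion strips any padding that wc may add.
    echo "rx=$((rx)) tx=$((tx))"
}

# Example:
# count_queues /sys/class/net/sfp-lan/queues
```

For the listing above (rx-0 plus tx-0 through tx-15) this would report `rx=1 tx=16`.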

This is not related to RSS/LRO, but since I am testing everything on NVMe or RAID arrays, and the next tests will run only on this RAID 6 array, I would like to share this with anyone thinking about using the BPI-R4 as their own NAS. Here is the performance of the ongoing re-check of my RAID 6 array:

$ cat /proc/mdstat

Personalities : [raid4] [raid5] [raid6]

md127 : active raid6 sde[4] sdf[6] sdd[2] sdb[5] sdc[0] sda[1] sdg[7]

14650675200 blocks super 1.2 level 6, 512k chunk, algorithm 2 [7/7] [UUUUUUU]

[==============>......] check = 74.8% (2193851400/2930135040) finish=117.3min speed=104553K/sec

bitmap: 0/22 pages [0KB], 65536KB chunk
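The finish estimate in the check line can be reproduced from the other three numbers: remaining blocks divided by the current speed. A small sketch, assuming the block counts are in 1 KiB units so they can be divided directly by the K/sec speed; the function name is made up.

```shell
#!/bin/sh
# mdstat_eta DONE TOTAL SPEED_K: remaining time in minutes, given the blocks
# already checked, the total blocks and the current speed in K/sec.
mdstat_eta() {
    awk -v d="$1" -v t="$2" -v s="$3" \
        'BEGIN { printf "%.1f\n", (t - d) / s / 60 }'
}

# Values from the check line above.
mdstat_eta 2193851400 2930135040 104553
```

This prints `117.4`, in line with the finish=117.3min that mdstat reports (the small difference is rounding).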

I have to say that, as I created the array more than 10 years ago, it probably does NOT have a well-optimised stride and stripe width, so the performance of a re-sync and of running in degraded mode is lower. But I think the re-check is going quite well.

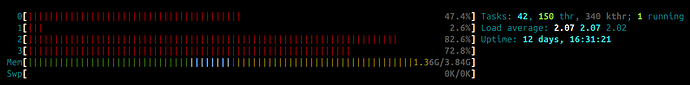

Loadavg is about 2:

And kernel info:

$ uname -a

Linux nas 6.16.0-rc1-bpi-r4-rsslro #1 SMP Wed Aug 13 11:33:51 CEST 2025 aarch64 GNU/Linux

I am working with a kernel built from 6.16-rsslro, and I have two questions.

a) ethtool -K eth0 lro on gets me an error “Could not change any device features”. Is there some magic I need first? A kernel CONFIG_* flag? Does the order in which ethtool flags are set matter?

b) If I try to set more than two RSS rules [e.g., use loc 2 or loc 3] I get EINVAL. Do I just need to choose the two dst-ips that matter the most for performance, or is there a way I can enable RSS for each VLAN?

AFAIR, “lro on” via ethtool was dropped at some stage because LRO needs to be defined by destination IP. So AFAIK LRO works on layer 3 (mapping the fast path via the IP header).

I have only tested with 1 rule, but AFAIK 4 should be possible.

Running Debian bookworm:

root@ratatosk:~# dpkg -l |grep ethtool

ii ethtool 1:6.1-1 arm64 display or change Ethernet device settings

root@ratatosk:~# ethtool -n eth0

4 RX rings available

Total 1 rules

rxclass: Cannot get RX class rules: Message too long

RX classification rule retrieval failed

root@ratatosk:~# ethtool -n ethLAN

4 RX rings available

Total 2 rules

Filter: 0

Rule Type: TCP over IPv4

Src IP addr: 0.0.0.0 mask: 0.0.0.0

Dest IP addr: 192.168.88.253 mask: 255.255.255.255

TOS: 0x0 mask: 0x0

Src port: 0 mask: 0x0

Dest port: 0 mask: 0x0

Action: Direct to queue 0

Filter: 1

Rule Type: TCP over IPv4

Src IP addr: 0.0.0.0 mask: 0.0.0.0

Dest IP addr: 192.168.80.253 mask: 255.255.255.255

TOS: 0x0 mask: 0x0

Src port: 0 mask: 0x0

Dest port: 0 mask: 0x0

Action: Direct to queue 0

root@ratatosk:~# ethtool -N ethLAN flow-type tcp4 dst-ip 192.168.100.253 action 0 loc 2

rmgr: Cannot insert RX class rule: Invalid argument

Is there something I did wrong in my attempt to push the rules?

Maybe try the previous version, AFAIK 6.15-rsslro. Maybe I/we did something wrong while porting after the SRAM/IRQ changes.

Testing with 6.14-rsslro.

Note that there is no 6.15-rsslro branch.

I can try testing with 6.14-rsslro2 next.

root@bananapir4:~# ethtool -N eth0 flow-type tcp4 dst-ip 192.168.89.92 action 0 loc 0

root@bananapir4:~# ethtool -N eth0 flow-type tcp4 dst-ip 192.168.89.91 action 0 loc 1

root@bananapir4:~# ethtool -N eth0 flow-type tcp4 dst-ip 192.168.89.90 action 0 loc 2

rmgr: Cannot insert RX class rule: Invalid argument

root@bananapir4:~# ethtool -n eth0

4 RX rings available

Total 2 rules

Filter: 0

Rule Type: TCP over IPv4

Src IP addr: 0.0.0.0 mask: 0.0.0.0

Dest IP addr: 192.168.89.92 mask: 255.255.255.255

TOS: 0x0 mask: 0x0

Src port: 0 mask: 0x0

Dest port: 0 mask: 0x0

Action: Direct to queue 0

Filter: 1

Rule Type: TCP over IPv4

Src IP addr: 0.0.0.0 mask: 0.0.0.0

Dest IP addr: 192.168.89.91 mask: 255.255.255.255

TOS: 0x0 mask: 0x0

Src port: 0 mask: 0x0

Dest port: 0 mask: 0x0

Action: Direct to queue 0

root@bananapir4:~# uname -a

Linux bananapir4 6.14.0-rc1-edge-filogic #1 SMP Sun Mar 16 16:40:16 UTC 2025 aarch64 GNU/Linux

It will let me use ethtool -N eth0 flow-type tcp4 dst-ip 192.168.89.90 action 1 loc 0 ← note sending to queue 1

but ethtool -n eth0 does not show the rule, so I can’t prove [trivially] that it works.
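When comparing rule sets across kernels, it can help to condense the `ethtool -n` output to one line per rule. A minimal sketch, assuming the output format shown in this thread ("Filter:", "Dest IP addr:", "Action: Direct to queue N"); the function name is made up, and other ethtool versions may format things differently.

```shell
#!/bin/sh
# summarize_rules: read `ethtool -n IFACE` output on stdin and print
# "<filter> <dst-ip> <queue>" for every rule found.
summarize_rules() {
    awk '
        /Filter:/                 { filter = $2 }
        /Dest IP addr:/           { dst = $4 }
        /Action: Direct to queue/ { print filter, dst, $NF }
    '
}

# Example:
# ethtool -n eth0 | summarize_rules
```

For the two-rule listing above this would give `0 192.168.89.92 0` and `1 192.168.89.91 0`. A rule that the driver accepted but does not report back will of course not show up here either.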

6.14-rsslro2, compiled with the same config I used for 6.14-rsslro and 6.16-rsslro, did not produce a kernel that sees the network interfaces. It’s 4 AM here, so I may not fix the config until later.

Using the mt7988a_bpi-r4_defconfig [admittedly filtered through the Armbian build framework] still has not produced a usable kernel. It’s now quarter to 5 AM EDT.

You should use the defconfig from the same branch. In 6.16 the SRAM handling was changed from allocation in the Ethernet driver to a dedicated sram-mmio driver. I guess this is missing when you use an older defconfig.

Not sure if I tested the 6.16 tree again after rebasing the RSS/LRO patches on the IRQ/SRAM changes.